3 ChatGPT Music Prompts for Generating Chords and Lyrics

OpenAI’s latest chatbot, ChatGPT, has been stirring up controversy online. The depth and accuracy of ChatGPT’s text generation are shocking even to those who have been following the latest developments in AI. You can jump to the 3 types of music prompts we found most interesting, or keep reading to learn more.

A new AI Music DAW called WavTool just dropped and it’s powered by GPT-4. Give it any type of instructions, from composing to adjusting audio levels and sound devices, and the software will do its best to deliver. We interviewed the company’s founder and share prompts you can use with the app to get more value from it.

In 2022, generative image services like DALL-E 2, Midjourney and Stable Diffusion proved that artificial intelligence could create realistic imagery in a matter of seconds. Concept artists who might once have been hired to sketch out characters and scenes for a creative director are now easily replaced.

For music producers, the closest thing is AudioCipher, the text-to-MIDI music plugin. On January 26th, 2023, Google published a paper describing an upcoming text-to-music generator named MusicLM.

So what does all of this mean for musicians? Machine learning algorithms can already produce any type of imagery from a prompt. It’s not going to be long until neural networks start generating musical pieces that sound like they were created by a human.

“MIDI keyboard in a recording studio” by Midjourney, produced in under a minute

OpenAI already has a history of publishing music generation tools like MuseNet and Jukebox. To date, these applications have been more of a curiosity than a real threat. Neither of them achieved realistic, high-fidelity music. This is partly because neural networks have difficulty producing long-term structures like a full song.

MuseNet allows users to export MIDI files based on broad parameters like genre and artist. Jukebox took this one step further and started producing complete audio files with vocals, lyrics, and full instrumental arrangements.

ChatGPT Music Generation

In December 2022, a number of music producer influencers started releasing meme videos of famous rappers, made with ChatGPT and another free tool called Uberduck. This playful technique is intended for fun and parody, and it should not be used to seriously imitate the artists. Here’s how it works:

- Come up with a lyric prompt for ChatGPT, like “write a lyrical verse in the style of [artist] about [topic]”

- Find a section of the lyric output that you like and plug it into Uberduck

- Export the audio from Uberduck and bring it into your DAW

- Use an autotune plugin to apply a melody. If you’re looking for a free option, try GVST’s GSnap
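The first two steps above are plain text handling, so they are easy to sketch in Python. This is a minimal illustration rather than part of any tool mentioned here: `build_lyric_prompt` and `pick_section` are hypothetical helper names, and we assume the chatbot separates verses with blank lines.

```python
def build_lyric_prompt(artist: str, topic: str) -> str:
    """Fill in the lyric prompt template from the first step."""
    return f"write a lyrical verse in the style of {artist} about {topic}"


def pick_section(lyrics: str, index: int = 0) -> str:
    """Return one stanza of the generated lyrics.

    Assumption: stanzas are separated by blank lines in the output.
    """
    stanzas = [s.strip() for s in lyrics.split("\n\n") if s.strip()]
    return stanzas[index]


if __name__ == "__main__":
    # Placeholder values; swap in your own artist and topic.
    print(build_lyric_prompt("[artist]", "[topic]"))
```

You would paste the prompt into ChatGPT by hand, then pass the reply to `pick_section` to grab the stanza you want to send to the voice tool.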

That’s the most popular ChatGPT music workflow that we’ve found. But people have run some other interesting experiments, so we’ll share those next.

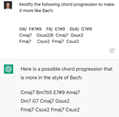

Independent researchers have already started experimenting with ChatGPT to generate text-based suggestions about how to improve on existing chord progressions. A user on Twitter, Jeffrey Emanuel, published several examples. Here are three of our favorites.

ChatGPT Chord Progressions

Emanuel used the prompt “modify the following progression to be more like Bach” and provided a text-based chord chart. The results may be entertaining, but the truth is that ChatGPT’s natural language processing is not multimodal. This means that it has not been trained on music and it can’t form honest opinions about aesthetic improvements.

A trained composer would never mistake ChatGPT’s progression for Bach’s music. The final chord is a dead giveaway, because Bach would not end a composition on a sus2 chord. It would have been too dissonant and unresolved for the end of a piece during the Baroque era. But it’s interesting to see how well it mimicked the initial chord chart input.
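The "dead giveaway" described here is simple enough to check automatically. The sketch below flags a text chord chart whose last chord is suspended. The chart format (chord names separated by bars or spaces) is our assumption, since the original chart is only shown as an image, and `parse_chart` and `ends_unresolved` are hypothetical names.

```python
import re

# Matches suspended-chord suffixes such as "sus", "sus2" or "sus4".
SUSPENDED = re.compile(r"sus[24]?")


def parse_chart(chart: str) -> list[str]:
    """Split a chart like '| C | Am | F | Gsus2 |' into chord names."""
    return [tok for tok in re.split(r"[|\s]+", chart) if tok]


def ends_unresolved(chart: str) -> bool:
    """True when the final chord of the chart is a suspended chord."""
    chords = parse_chart(chart)
    return bool(chords) and SUSPENDED.search(chords[-1]) is not None


if __name__ == "__main__":
    print(ends_unresolved("| C | Am | F | Gsus2 |"))
    print(ends_unresolved("| C | G | Am | F | C |"))
```

A check like this only catches one stylistic tell. It says nothing about voice leading or any of the other things a trained composer would listen for.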

ChatGPT Guitar Tablature

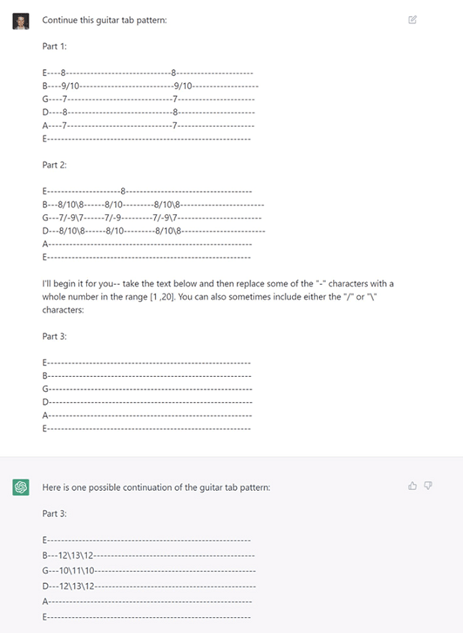

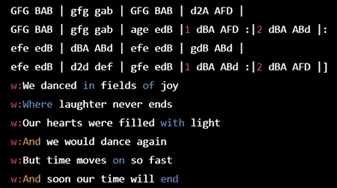

Guitar tabs are another interesting example of how ChatGPT could be used to iterate on existing music. Emanuel wrote “Continue this guitar pattern” and provided notation that any guitarist will recognize from sites like Ultimate-Guitar.

Once again, the AI has revealed its ignorance by using the same slash symbol for both the ascending and descending chord. Guitar tabs use the ‘/’ character to indicate a slide up and ‘\’ to slide down to a note. The first chord goes up the fretboard to the second chord, but uses the wrong kind of slash.
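The slide convention is mechanical, which makes the mistake easy to illustrate. Here is a minimal Python sketch that picks the right character from the two fret numbers. The function names are hypothetical, and we assume a higher fret number means moving up the fretboard.

```python
def slide_symbol(from_fret: int, to_fret: int) -> str:
    """Return '/' for a slide up and '\\' for a slide down."""
    if to_fret > from_fret:
        return "/"
    if to_fret < from_fret:
        return "\\"
    raise ValueError("a slide needs two different frets")


def render_slide(from_fret: int, to_fret: int) -> str:
    """Format one slide the way it would appear on a tab line."""
    return f"{from_fret}{slide_symbol(from_fret, to_fret)}{to_fret}"


if __name__ == "__main__":
    print(render_slide(5, 7))  # slide up
    print(render_slide(7, 5))  # slide down
```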

What we’re seeing is an imitation of guitar tablature rather than actual musical thought. But again, this is the first time AI has been able to respond to prompts for creating tablature. It could still be a fun randomization tool if you’re feeling experimental.

ChatGPT Lyric Generation

Similar efforts were made to combine text-based chord charts with AI lyric generation. The output was visually convincing, but Twitter users who replied to Emanuel’s thread complained that when they tried singing the lyrics over the chords, it didn’t sound good.

MuseNet and Jukebox (GPT-2 & GPT-3)

So if ChatGPT doesn’t really understand music or generate meaningful content, can we expect to see meaningful improvements to AI music? The short answer is yes – I predict that Spotify will take the lead on this.

If you already know about MuseNet and Jukebox, you can skip forward to the Spotify section. Otherwise, let’s review two of the biggest AI music and melody generator tools to date.

MuseNet was published in April 2019, powered by a deep neural network that trained on a large MIDI dataset. Users select a set of parameters and submit their request. MuseNet simply runs an API call to their server and returns a set of variations based on your initial input. The process takes about one minute.

The author of MuseNet’s paper compared the architecture to GPT-2, a generative pre-trained transformer model developed by OpenAI. The GPT family continues to evolve and become more powerful every year. A pre-trained model uses deep learning to predict what kind of musical phrases should follow the initial input.

Jukebox diagram for audio generation

Jukebox launched one year after MuseNet, in April 2020, this time built upon the architecture of GPT-3. Their app generated music through a novel process of encoding, embedding and then running a decoder, in an effort to produce higher quality audio. Jukebox’s audio exports were a major improvement on the MIDI files of MuseNet, but only the most abrasive genres of music (death metal, etc.) could be captured accurately.

Python developers can access OpenAI’s API and improve on the basics of tools like MuseNet. Instructions for doing so can be found on their GitHub portfolio. A great example of this is MuseTree, an independently developed tool that provides a more robust interface for managing music generated by MuseNet.

MuseTree interface

OpenAI did not release a new music generator tool in 2021 or 2022, but machine learning researchers have continued to publish articles on music composition and deep learning through websites like arXiv.

OpenAI’s GPT-4 is currently slated for release in 2023, which raises the question: will GPT-4 be capable of music composition, or will it remain focused entirely on text?

Meta was expected to release a resource comparable to GPT-4 called Galactica, but initial demos were harshly criticized.

It’s possible that GPT-4, or any other large language model, could be used to generate music compositions. Natural language processing is designed to process and generate text. Music can be represented as a sequence of symbols that can be processed in a similar way.
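To make the "sequence of symbols" idea concrete, here is a small Python sketch that turns a melody into text tokens and back. The token format (`C4:1` for a one-beat middle C) is our own invention for illustration, not something any model is known to use, and we assume the common convention that MIDI note 60 is C4.

```python
NOTE_NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]


def note_to_token(pitch: int, beats: float) -> str:
    """Encode one note as 'name+octave:beats' (assumes MIDI 60 == C4)."""
    octave = pitch // 12 - 1
    return f"{NOTE_NAMES[pitch % 12]}{octave}:{beats:g}"


def encode(melody: list[tuple[int, float]]) -> str:
    """Turn (MIDI pitch, beats) pairs into one space-separated string."""
    return " ".join(note_to_token(p, b) for p, b in melody)


def decode(text: str) -> list[tuple[int, float]]:
    """Invert encode(): parse tokens back into (MIDI pitch, beats) pairs."""
    melody = []
    for token in text.split():
        label, beats = token.split(":")
        # Note names are one letter, optionally followed by a sharp sign.
        split_at = 2 if label[1] == "#" else 1
        name, octave = label[:split_at], int(label[split_at:])
        melody.append(((octave + 1) * 12 + NOTE_NAMES.index(name), float(beats)))
    return melody


if __name__ == "__main__":
    # A C major arpeggio: C4, E4, G4.
    print(encode([(60, 1), (64, 0.5), (67, 2)]))
```

Once a melody is a string like this, a language model can treat it like any other text and predict the next token in the sequence.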

That being said, generating high-quality music is a complex task that involves a deep understanding of musical structure, theory, and aesthetics. It’s still unclear whether a language model like GPT-4 will have the necessary knowledge and expertise to accomplish this.

Spotify: The More Likely Candidate

We’ve previously written about Spotify AI music tools. One of their most impressive tools, called Basic Pitch, lets users upload any song and transcribe it to MIDI in under a minute. These MIDI files can then be exported from the site for free, granting unprecedented access to raw musical material.

Spotify’s near-unlimited GPU power, massive database of audio, and deep audience metrics position them as the most likely player in the AI music space moving forward. This could explain why companies like Meta and OpenAI are more interested in pursuing text and image generation.

Soundtrap, a music production DAW by Spotify, would likely manage the technical side of these API calls and monetize the use of the music generator. This would allow them to collect money not only for the initial purchase of the audio workstation, but also for rendering additional musical content.

We’ve previously covered AI mixing and mastering tools like Spleeter and SongMastr, which can detect the mix of an existing track and attempt to apply it to a song. It’s easy to imagine how the music generator services provided by Spotify would be coupled with a mixing tool, to cover all bases.

This means that career musicians are safe in the short term, but would be advised to focus on building human connections and networking with people rather than shutting themselves up in a studio. In the long term, our roles as musicians and music producers may shift toward feeding prompts into artificial intelligence and managing its output to meet the specifications of the people who need the music.